Career

-

October 2020 - Present

Co-Founder and CEO

Omnata was founded to be the operational part of the modern data stack, and to make traditional middleware redundant.

Omnata provides direct, native integrations between cloud data warehouses and enterprise cloud applications.

-

COVID-19 pandemic

A strange and difficult year for the whole world, as a global pandemic forced many businesses to either hibernate, close down, or figure out how to work remotely.

-

January 2020 - October 2020

Head of Technology

Honeysuckle Health, a joint venture between Cigna (NYSE:CI) and nib (ASX:NHF), is a specialist healthcare data science and service company with the specific purpose of delivering better health outcomes for members and communities generally. It does this by understanding their current and future health needs, helping them to prevent and manage disease risk and make better treatment decisions.

-

Numenta

Had the opportunity to visit the Numenta office in San Mateo for a meetup event, meet the team in person, and talk about brains.

-

Data Sharing

Performed a proof of concept of data sharing in the private health insurance industry, involving the setup of a peer-to-peer data consortium. Spoke about Secure Data Sharing at the inaugural Snowflake Summit.

-

Technical writing

Wrote Medium articles on some of my more advanced uses of Snowflake, including machine learning, feature engineering, intelligent computing and bootstrapping.

-

Snowflake

Began using Snowflake as a cloud data warehouse. Built Active Directory integration, change management, and grant management scripts along the way.

-

Data Streams and Lakes

Continued to build out nib’s data infrastructure, including data streaming, a data lake and processing at scale.

-

BigData processing

Familiarised myself with the common Apache BigData projects so that I could scale out analytics workloads and analyse streaming data. Focused on Hadoop MapReduce, Spark, Hive and Kafka.

-

Newcastle Futurists Meetup

Co-founded a meetup in Newcastle for futurists.

-

Hierarchical Temporal Memory

Twelve years after its release, I discovered and read On Intelligence by Jeff Hawkins. It outlined some basic principles of how intelligence arises in the neocortex, and solidified a feeling I had developed over a decade-long interest in machine learning: that the field is not on the path toward Artificial General Intelligence.

I plan to learn as much as I can about the neocortex, and will follow Jeff’s research company Numenta very closely.

-

January 2017 - December 2019

Enterprise Architect

Provide architectural guidance on IT initiatives, with a focus on data and API integration.

-

Real time data

Off the back of the data science courses I’d taken, I became interested in real-time data streaming and analytics. I began to comprehend the volume of data available in modern systems, and the potential benefits if properly harnessed.

-

R

Completed an R course that covered data sanitisation and some basic machine learning/statistical analysis. It was nice to get back to some functional programming (the vectorised functions make for some nicely terse code), but I had similar frustrations to those I had with Python: the community produces very useful but awfully designed libraries.

-

Python

Completed a Python course that covered data manipulation and sanitisation. I didn’t mind the language overall (like Ruby, it’s hard to optimise for multi-threaded workloads thanks to the GIL), and I love that there’s only one way to do things.

I found the most attractive and most off-putting aspect to be the vast number of third-party libraries. Attractive because they provide so much functionality for machine learning and data mining. Off-putting because many of them have god-awful APIs, presumably written by academics in the subject area rather than by software developers.

-

Containers

Moved some things into Docker. Found that for stateless components it allowed a much simpler deployment pipeline and greatly reduced some of the traditional deployment risks.

-

Populo

Finally launched Populo to the public.

-

May 2015 - January 2017

QA / Release Manager

Responsible for software quality assurance and the software delivery pipeline within the Core Applications team.

-

Continuous delivery

Championed continuous delivery, and moved many of nib’s core applications toward this model by removing obstacles and modernising the deployment pipeline.

-

Automated testing

Focused heavily on automated testing and various other aspects of software quality. Learned a lot about what works well and what doesn’t, in both the short and long term.

-

Learned Ruby and Rails

Love the ecosystem: a library for everything, and great package dependency management. If I’m honest, I did miss static type checking.

-

November 2014 - May 2015

QA Engineer

Responsible for driving improvement of software delivery practices within the Core Applications team.

-

GitHub

Convinced a global community of users of a SolarWinds NOC product to migrate their custom monitoring components from an old private SharePoint list to GitHub.

-

PaaS adventures

Spent some time trying to find a good PaaS stack for Populo, as I didn’t like managing a whole VM just for a simple website. Ran it on Elastic Beanstalk for a while, but found it a bit restrictive and unreliable (frequent restarts). Tried Azure Web Sites and had a similar experience.

I still feel like PaaS should be the ultimate goal, but the tools don’t seem ready yet, so I’ve decided to fall back to IaaS for now.

-

Populo

We had architected the Populo payment model around PayPal Adaptive Payments, but when we applied for a production account, PayPal advised that they would not support our proposed use of it due to insurance underwriting rules. Another launch date missed, and back to the drawing board for the main customer workflow.

-

Application Security

Decided to do some more practical application security research. Discovered first-hand that many large companies have poor web security practices. Decided to retire before getting into any trouble.

-

Service Desk Software

After surveying the woeful options available for an ITIL service desk/NOC, a colleague and I wrote our own, a .NET web application. It was designed around the actual challenges faced by service desks, and featured:

- Very fast navigation and immediate information, with useful live data on incident queues and volumes

- Visibility and alerting on SLAs for initial and ongoing customer contact

- Automated ticket allocation

- Integration with Microsoft Lync for inbound phone enquiries (routing the customer directly to the current assignee)

-

Data science

Discovered Kaggle, and found that I could apply my machine learning enthusiasm to the growing field of data science.

-

Ditched Subversion for Git

Have never looked back. Not even a slight glance.

-

Populo

Came to the realisation that Populo had become an overengineered mess. In chasing the ultimate feature set, it had become so complex that even I could no longer use it. Learned a tough and valuable lesson about building a minimum viable product (MVP).

Stripped out a great many unnecessary features in an effort to make the site usable, and did a design overhaul.

-

Populo

Found that development in Google Web Toolkit got incredibly slow as the project grew, and having it take care of the client-side elements became more of a burden than a help. Meanwhile, other client-side frameworks had matured greatly, and the HTML5 canvas enjoyed widespread compatibility.

So we made the tough decision to cut our losses and rewrite the site. We were able to keep a lot of the server-side Java components, but scrapped Flash completely in favour of HTML/JavaScript.

-

Microsoft certified

Given my rocky but long-term relationship with Microsoft products, I decided to finally tie the knot and get the MCITP: Enterprise Administrator certification.

-

March 2010 - November 2014

Managed IT Services

Held a number of different technical and management roles. Initially an IT consultant and support escalation point, progressing to ICT Manager and then Managed Services architect.

-

Populo

A friend and I started writing an event ticketing web site in our spare time. I decided to use Google Web Toolkit on the promise of not needing to worry about front-end code. Opted for Flash for the seating planner in the absence of a better option.

-

Enterprise java

Found that outside of the neat and pure Java I learned at university, there was an unsightly mess called J2EE. Countless XML files, factories for your factories, class annotations, beans, volumes of documentation. Soul-crushing stuff.

-

March 2008 - March 2010

Software Developer

Software developer working on services and applications for the lending industry. Predominantly B2B messaging, document manipulation and metadata management on a Java stack.

-

June 2007

Completed B. Computer Science

University of Newcastle

-

Built a compiler

Tokenising, lexing, parsing… for the first time I really knew the mechanics of how code written in a high-level language ultimately runs on hardware. Finally I understood the commands I’d had to run for years to build Linux applications from source. Still didn’t understand why Linux people like to make you do this.

-

Took a machine learning course

Had a profound impact on my thinking, broadening my conception of what’s possible with computation. Have maintained an interest to this day.

-

Learned an assembly language

Programmed a basic robot to follow a white line. Cool to have done, but will probably never work that close to the hardware.

-

Learned Scheme

Another interesting paradigm. The recursion really did remind me of recursion.

-

Learned Prolog

The declarative paradigm took some adjustment but I felt the appeal. The industry was infatuated with OO, so I saw it as just an interesting detour.

-

August 2003 - August 2005

APC Driver, Australian Army Reserve

Joined for part-time work while studying at university, and to try something a bit different. Operated and maintained the M113A1 Armoured Fighting Vehicle. Got yelled at and fired/detonated/drove countless different weapons. Won a marksmanship award.

-

Customers

At work, a farmer brought in a rat-infested C64 that he called “a 83 model that probably just needs a service”.

-

Telephony

Expanded my telephony repertoire, this time for inbound calls:

- Wrote a script for a customer with an expensive, timed ISDN connection who needed occasional after-hours VPN access. It answered an inbound phone call and then connected back out to the internet for a duration specified by DTMF tones.

- Gaffer-taped an array of analog modems to a desktop PC and set up a direct-dial service for remote workers.

-

Perl

Learned Perl. At work we were deploying a lot of multi-purpose Linux servers, so I wrote a provisioning script that presented a one-page configuration form and applied it to the machine.

-

March 2002 - March 2004

IT consultant

First job in IT, working in rural NSW for a small, four-person company providing IT support to businesses and government. Configured Red Hat Linux servers for a variety of tasks, including multi-site VPN routing, file serving and web proxying.

-

December 2001

Completed Higher School Certificate

Gunnedah High School

-

1998

Installed Red Hat Linux from some PC magazine CD. Was impressed with what it could do, but I was too much into games to run it full time.

-

Early years

Farm work

I grew up near my grandparents’ farm, did a lot of farm work, and saw some fascinating applications of technology. While land-grading parts of the farm for irrigation, my uncle wrote a Turbo Pascal program to calculate a plane of best fit from the paddock survey data. We had a John Deere 8400 that could self-diagnose just about any operating fault, and could even steer automatically.

-

December 1995

Completed Primary School

Carroll Public School

-

1995

Upgraded to an Intel 80486 PC. Enjoyed countless games during what I consider to be the golden era of PC gaming.

-

1992

My school bought a Macintosh. The only lasting impression I have is the startup sound, and that it ran Prince of Persia.

-

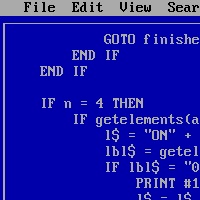

1993

Wrote my first game mod, which involved me editing the source code of QBASIC Gorillas to make the explosions larger. I then decided to write my own maze exploration game. From memory I took a rather crude approach and just used a huge collection of IF statements on the player coordinates to allow or prevent movement and detect the end.

-

1992

We had a 2400 baud modem that I used one day to dial a BBS. I remember an ASCII art banner but wasn’t sure what to actually do. I must have left it connected, because some time later Dad asked me about a $15 phone call on the bill.

-

1992

Another friend of the family gave us an old Intel 80286 PC. He must have detected some enthusiasm on my part, because he included a copy of the canonical The C Programming Language by Kernighan and Ritchie, and told me it was the bible of computer programming. After he left, I read it cover to cover and then wrote my own merge sort function. Just kidding: I tossed the book aside and spent the afternoon playing F-15 Strike Eagle III.

-

1989

Some bearded friend of my parents gave us an old Amstrad CPC, which had a tape unit you loaded programs from. I remember playing a game called Army Moves and my mum grew very tired of the repetitive music.

-